
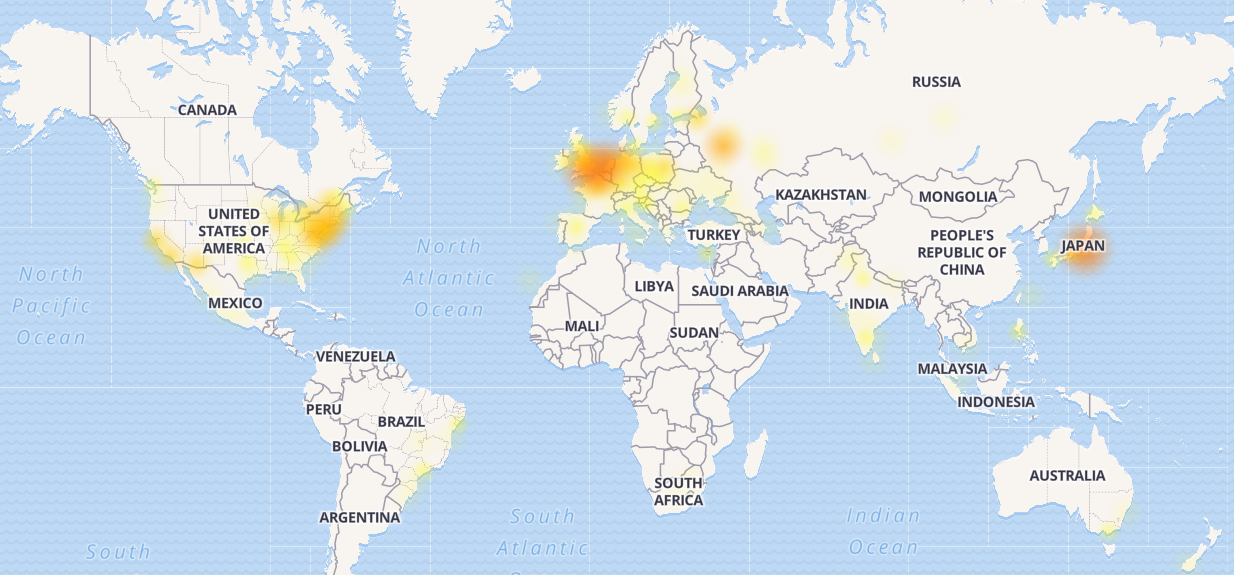

12/21/2023

Slack outage June 2018

On May 12, 2020, Slack had its first significant outage in a long time. We published a summary of the incident shortly after, but this story is an interesting one, and we’d like to go into more detail on the technical issues around it. The user-visible outage began at 4:45pm Pacific time, but the story really begins around 8:30am that morning.

Our Database Reliability Engineering team was alerted about a significant load increase in part of our database infrastructure at the same time as our Traffic team received alerts that we were failing some API requests. The increased load on the database was due to a rollout of a configuration change, which triggered a longstanding performance bug. The change was quickly pinpointed and rolled back; it was a feature flag which performed a percentage-based rollout, so this was a fast process. We had some customer impact, but it lasted only for three minutes and most users were still able to send messages successfully throughout this brief morning incident.

One of the incident’s effects was a significant scale-up of our main webapp tier. Our CEO, Stewart Butterfield, has written about some of the impact of the lockdown and stay-at-home orders on Slack usage. As a result of the pandemic, we’ve been running significantly higher numbers of instances in the webapp tier than we were in the long-ago days of February 2020. We autoscale quickly when workers become saturated, as happened here, but workers were waiting much longer for some database requests to complete, leading to higher utilization. We increased our instance count by 75% during the incident, ending with the highest number of webapp hosts that we’ve ever run to date.

Everything seemed fine for the next eight hours, until we were alerted that we were serving more HTTP 503 errors than normal. We spun up a new incident response channel and the on-call engineer for the webapp tier manually scaled up the webapp fleet as an initial mitigation. We very quickly noticed that a subset of the webapp fleet was under heavy load while the rest of the webapp instances were not. Multiple strands of investigation began, looking into both webapp performance and our load balancer tier. A few minutes later, we identified the problem.

We use a fleet of HAProxy instances behind a layer 4 load balancer to distribute requests to the webapp tier.

Figure 1: High-level view of Slack’s ingress load-balancing architecture

We use Consul for service discovery, and consul-template to render lists of healthy webapp backends that HAProxy should route requests to. We don’t render our webapp host list directly into our HAProxy configuration file, however. The reason for this is that updating the host list via the configuration file requires reloading HAProxy. The process of reloading HAProxy involves creating a brand-new HAProxy process while keeping the old one around until it’s finished dealing with in-flight requests. Very frequent reloads could lead to too many running HAProxy processes and poor performance. This constraint is in tension with the goal of autoscaling the webapp tier, which is to get new instances into service as quickly as possible.
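
The tension described above between limiting HAProxy reloads and getting new webapp instances into service quickly can be illustrated with a small model. This is only a sketch under stated assumptions, not Slack's implementation: the function `plan_reloads`, the `min_interval` parameter, and the timestamps are all hypothetical. The model assumes a reload picks up every host that is healthy at that moment, and that reloads are spaced at least `min_interval` seconds apart.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ReloadPlan:
    reload_times: List[float]  # when each (hypothetical) reload would run
    waits: List[float]         # how long each host waits before receiving traffic


def plan_reloads(host_ready_times: List[float], min_interval: float) -> ReloadPlan:
    """Toy model: space reloads at least `min_interval` apart and report
    how long each newly healthy host waits for the reload that adds it."""
    reload_times: List[float] = []
    waits: List[float] = []
    for ready in sorted(host_ready_times):
        if reload_times and reload_times[-1] >= ready:
            # A reload is already scheduled at or after this host became
            # healthy, so the host is picked up by that reload.
            scheduled = reload_times[-1]
        else:
            # Schedule a new reload, no sooner than min_interval after the last.
            earliest = reload_times[-1] + min_interval if reload_times else ready
            scheduled = max(ready, earliest)
            reload_times.append(scheduled)
        waits.append(scheduled - ready)
    return ReloadPlan(reload_times, waits)


if __name__ == "__main__":
    ready = [0, 1, 2, 30]  # seconds at which new hosts become healthy (made up)
    for interval in (0, 10):
        plan = plan_reloads(ready, interval)
        print(interval, len(plan.reload_times), max(plan.waits))
```

In this model, a larger `min_interval` means fewer reloads (and so fewer old HAProxy processes lingering at once), but newly healthy hosts wait longer before they receive traffic, which is the trade-off the text describes.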
